Post-testing, with its backward effects, has largely been the “main course” of the past ten years of retrieval practice. However, there have been offshoots: knowledge transfer, errorful generation and elaboration.

For the past three weeks (and weekends) I have been re-reading about pretesting and the forward effects of testing: how pretesting “potentiates” learning, how retrieval practice indirectly enhances the subsequent encoding of later tested material, and why getting it wrong can help you get it right. And how the University of Florida

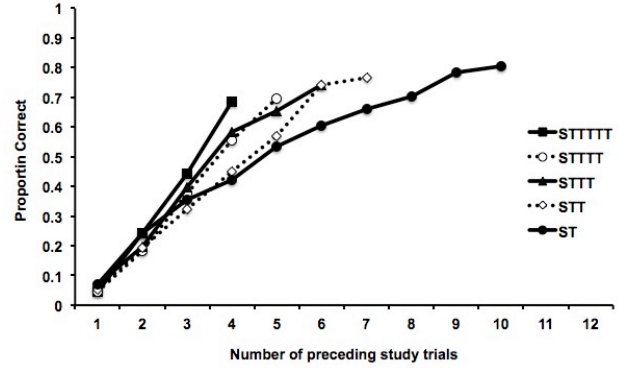

The earliest reference I can find is Izawa (1966). Her work emerged from asking whether learning could occur during a test. Izawa studied how different patterns of study, test, and neutral trials affected later performance. She generally found that learning on any given study trial was more efficient when more tests had been included in the sequence of previous trials. She concluded that neither forgetting nor learning occurred on test trials, but that taking a test could improve the amount of material learned in a subsequent study session, and that testing slowed forgetting after the test.

Of course, the most common experience for learners is the test-plus-feedback condition. In this model, testing usually greatly outpaces the restudy-only condition, even when learners are exposed to the material for the same amount of time. What was not confirmed was how or why.

The benefit of testing probably arises both from the direct effect of testing and from the indirect effect of testing potentiating future learning (potentially as a result of feedback). Arnold and McDermott (2013) presented evidence for increased learning efficiency through enhanced subsequent encoding, and rapid reacquisition, or relearning, is a hallmark of Successive Relearning. We know that knowledge can be quickly and efficiently reactivated: just seven minutes was required to relearn 70 pre-learned word pairs (Vaughn et al., 2016). Furthermore,

Relearning had pronounced effects on long-term retention with a relatively minimal cost in terms of additional practice trials.

Rawson et al. (2018)

Using classroom.remembermore.app daily in class, I can confirm that pupils’ recall efficiency accelerates as success builds motivation, and so we recall more and more cards in less and less time. Following encoding and relearning, 10 items can be recalled almost as fast as they can be read. The Alternative Provision learners would easily and correctly identify 15 Romeo and Juliet characters in 30 seconds.

It may well be from this arena of study that the benefits of errorful generation surfaced. The knowledge that:

Unsuccessful retrieval attempts made during prior testing are the driving force behind test-potentiated learning.

Arnold and McDermott (2013)

Hence you can now understand why an interest in pretesting and errorful generation led me to re-examine potentiation effects. That led to Todd et al. (2020), given it was both a recent paper and (almost) classroom based: “Improving students’ summative knowledge of introductory chemistry through the forward testing effect: examining the role of retrieval practice quizzing.”

Chemistry is tough

The problem: Too many STEM students “fail to complete” the introductory course.

The solution: Retrieval-based study to augment students’ learning of chemistry over an entire semester.

Now, what is not included in the opening summary is the term “interim testing” or “interpolated testing”, which is what focuses the study on the forward testing, or potentiated learning, effects of retrieval (as well as post-testing).

Methodology

Prior knowledge – precalculus maths. One instructor, two class periods per week (noted as this also defines the spacing). 12 self-assessed quizzes (e.g. Dimensional, Properties, Isotopes, Naming, Moles) – with students encouraged to re-work the exam questions they had answered incorrectly.

Results

Nearly 80% of the class opted to be part of the quizzing system, and student participation levels were exceptionally high.

Participants were categorised into high, moderate, or low participation groups.

- Low: 50% or fewer of the 12 retrieval quizzes (n = 35).

- Moderate: more than 50% but fewer than 75% of the quizzes (n = 31).

- High: 75% or more of the 12 retrieval quizzes (n = 80).
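
For anyone wanting to apply the same banding to their own quiz data, here is a minimal sketch in Python. It is my own illustration, not code from Todd et al. (2020). The function name `participation_group` is hypothetical, and it assumes the low band means 50% or fewer, so that the three bands cover every possible score.

```python
def participation_group(quizzes_completed: int, total_quizzes: int = 12) -> str:
    """Band a student by the share of retrieval quizzes they completed.

    Hypothetical helper. Assumed thresholds, taken from the list above:
    low = 50% or fewer, moderate = more than 50% but fewer than 75%,
    high = 75% or more.
    """
    if total_quizzes <= 0:
        raise ValueError("total_quizzes must be positive")
    if not 0 <= quizzes_completed <= total_quizzes:
        raise ValueError("quizzes_completed must be between 0 and total_quizzes")

    # Integer comparisons avoid floating-point rounding at the boundaries.
    if 4 * quizzes_completed >= 3 * total_quizzes:  # 75% or more
        return "high"
    if 2 * quizzes_completed > total_quizzes:  # more than 50%
        return "moderate"
    return "low"


if __name__ == "__main__":
    for completed in (6, 7, 8, 9):
        print(completed, participation_group(completed))
```

With 12 quizzes, these thresholds make the moderate band quite narrow: only students completing 7 or 8 quizzes fall into it.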

In a nutshell – what Todd et al. (2020) termed the “Retrieval Practice Quiz system” provided a forward testing effect. Students who engaged in more than half of the online quizzes saw significant learning benefits on their cumulative final exam.

In a natural classroom setting, the forward testing effect improved students’ summative knowledge of introductory chemistry by 7–8 points on a 100-point cumulative final exam, slightly less than a full letter grade. Yet the moderate group frequently outperformed the high group. Again, Todd et al. (2020) note limitations in the power of the study.

Why did this model of interpolated or interim testing work?

Re-examining and reassessing exam materials allowed students both to correct misunderstandings about course material and to strengthen their retention of exam material, both of which are vital to success in future courses. The retrieval quizzes “offered students an additional, low-stress assessment after each during-term exam and prior to the cumulative final” – although I am not sure exactly what point Todd et al. (2020) are making here.

Consequently, their conclusion: retrieval practice may be a more efficient solution to the issue of STEM attrition than providing supplemental courses.

I do not think that this study offers a startling revelation or headline. “Students who test do better than students who don’t.” As Todd et al. (2020) acknowledge, the results could easily be attributed to time spent on task, with students in the business-as-usual condition simply not engaging in any other study activities.

Final point: why the cocktail party image? I often think of potentiated test effects as much like the better-known cocktail party effect, where, within the noise of a lesson, your attention is piqued by a particular reference or knowledge nugget that you bumped into in the pretest. That, and the link between cocktails and chemistry.

Arnold, K. M., & McDermott, K. B. (2013). Test-potentiated learning: Distinguishing between direct and indirect effects of tests. Journal of Experimental Psychology: Learning, Memory, and Cognition, 39(3), 940–945. https://doi.org/10.1037/a0029199

Arnold, K. M. (2013). The Enhancing Effect of Retrieval on Subsequent Encoding: Understanding Test-Potentiated Learning. Arts & Sciences Electronic Theses and Dissertations, 1027.

Izawa, C. (1966). Reinforcement-test sequences in paired-associate learning. Psychological Reports, 18, 879–919.

Izawa, C. (1971). The test trial potentiating model. Journal of Mathematical Psychology, 8, 200–224.

Rawson, K. A., Vaughn, K. E., Walsh, M., & Dunlosky, J. (2018). Investigating and explaining the effects of successive relearning on long-term retention. Journal of Experimental Psychology: Applied, 24(1), 57–71. https://doi.org/10.1037/xap0000146

Vaughn, K. E., Dunlosky, J., & Rawson, K. A. (2016). Effects of successive relearning on recall: Does relearning override the effects of initial learning criterion? Memory & Cognition, 44(6), 897–909. https://doi.org/10.3758/s13421-016-0606-y
