I am not rushing to the defence of the exam boards, but the perennial “results day” articles* rarely fail to direct some meagre attack or critique towards them. This year, the exam boards have put forward their own infomercials, here and here, to reassure their various audiences. Furthermore, this year the spectre of yet more reform looms as Nick Gibb’s criticisms echo down the empty corridors of schools devoid of exhausted teachers, who are still getting to grips with the new GCSEs and A Levels and awaiting the 2016 GCSEs. Ofqual declined to comment. (Only this year they have started early.*)

Whilst I accept it may not be prudent to have four exam boards all completing these hefty requirements, doesn’t the open market encourage quality? Certainly the requirements of Ofqual’s qualification and subject conditions are rigorous. Ofqual’s assurance processes can only be described as significant. I do not see a race to the bottom. Likewise, whilst I agree with Brian Lightman’s comment that

… an over-reliance on external testing and a low-trust culture risks driving the curriculum into the straitjacket of what can be assessed in a written test.

Assurance is more appropriate than rigour

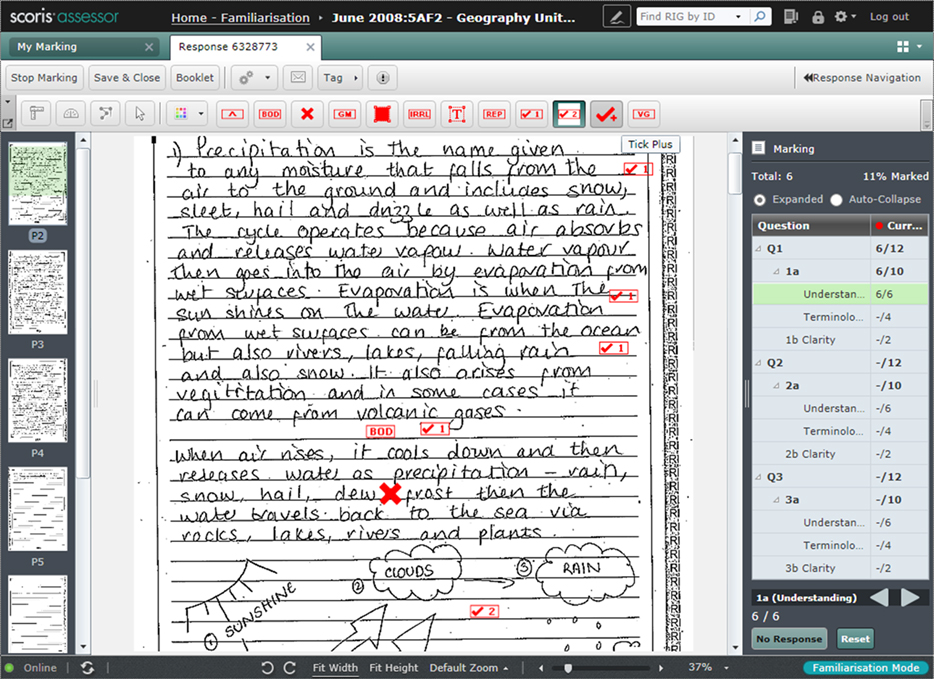

Not only can we expect the exam boards to have met the rigorous, precise Ofqual GCSE conditions, they have also been expected to construct an extensive and thorough Assessment Strategy. Whilst many standardisation processes have changed little, one area has evolved rapidly in recent years: marking, or rather e-marking. Why should e-marking encourage confidence, confidence that at least the mark awarded is accurate? (We are not discussing the quality of the specification, question paper or mark scheme.)

Scripts are prepared and cut cleanly, ready for scanning. They are processed at approximately 3 pages per second, or 10,000 images per hour, with both sides of the script scanned simultaneously in bi-tonal and/or colour. Bar codes and quality control checks ensure the completed script has been scanned. At this point, the scripts are indexed and ready for marking. I say ready for marking, although by this point the multiple-choice tests can be marked. The original paper scripts are then reassembled and stored. Incidentally, it is not just test papers that can be shared via onscreen marking packages. Video submissions of practical or performance tests, such as drama or dance performances, can be viewed and e-marked.

Speed and availability of the scripts are the most obvious benefits, with examiners and supervisors able to review the same script or response simultaneously and to raise and respond to issues and questions immediately. This real-time quality assurance enables continual improvement rather than post-hoc adjustment. Workflow management and efficiencies, candidate anonymity and accurate totalling are additional benefits.

Perhaps the most powerful benefit is Item Marking, where one marker is assigned a specific question or section which corresponds to his/her particular area of expertise, and marks all candidate responses to that question or section only. This is a more accurate approach, as long as examiner standardisation minimises systematic marking biases.

It is worthwhile to know that the Ofqual Annual Report reported that, of the 77,400 grades challenged, 18.7% changed. That is the equivalent of 1% of all grades issued. There is a lot more I would like to know about this information. Which qualifications? Which subjects? And what was the amendment? Perhaps if schools were more familiar with the assurance procedures in place, or maybe if an exam board wanted to reuse / refresh this post and share it with key staff (typically SLT exams leads and curriculum leaders), then schools might have greater confidence in the system.
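
To put those figures in absolute terms, here is a rough back-of-the-envelope sketch in Python. It uses only the two numbers quoted above (77,400 challenges, 18.7% changed); the function name is my own, and the result is an approximation because the published percentage is itself rounded.

```python
def grades_changed(challenged: int, change_rate_percent: float) -> int:
    """Approximate count of challenged grades that were changed.

    Hypothetical helper for illustration; the inputs are the figures
    quoted in the post, and the output is rounded to a whole grade.
    """
    return round(challenged * change_rate_percent / 100)


challenged = 77_400   # grades challenged, as quoted above
rate = 18.7           # percentage of challenged grades that changed

changed = grades_changed(challenged, rate)
unchanged = challenged - changed

print(f"Changed: roughly {changed:,}; unchanged: roughly {unchanged:,}")
```

On those figures, roughly 14,500 challenged grades were changed and roughly 63,000 were upheld, which is the sort of breakdown I would like to see by qualification and subject.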
